- Natural Language Processing / Generation

- LLM Alignment and Evaluation

- Conversational Agents

- Cognitive Psychology

- Physiological Sensing

- Behavioral Tracking

- User Interfaces

PhD in NLP-HCI-Psychology

University of Amsterdam, the Netherlands

MSc in Optimization and AI (NLP)

Heidelberg University, Germany

BSc in Computational Mathematics

XiDian University, China

Human Understanding

Using language, behavioral, and physiological sensing to understand how people perceive AI/LLM output.

LLM Alignment

Aligning LLMs or AI systems with human needs and domain-specific expertise.

Human-AI Coupling

Designing interventions and UIs for trustworthy human-AI coupling that, in turn, augments humans.

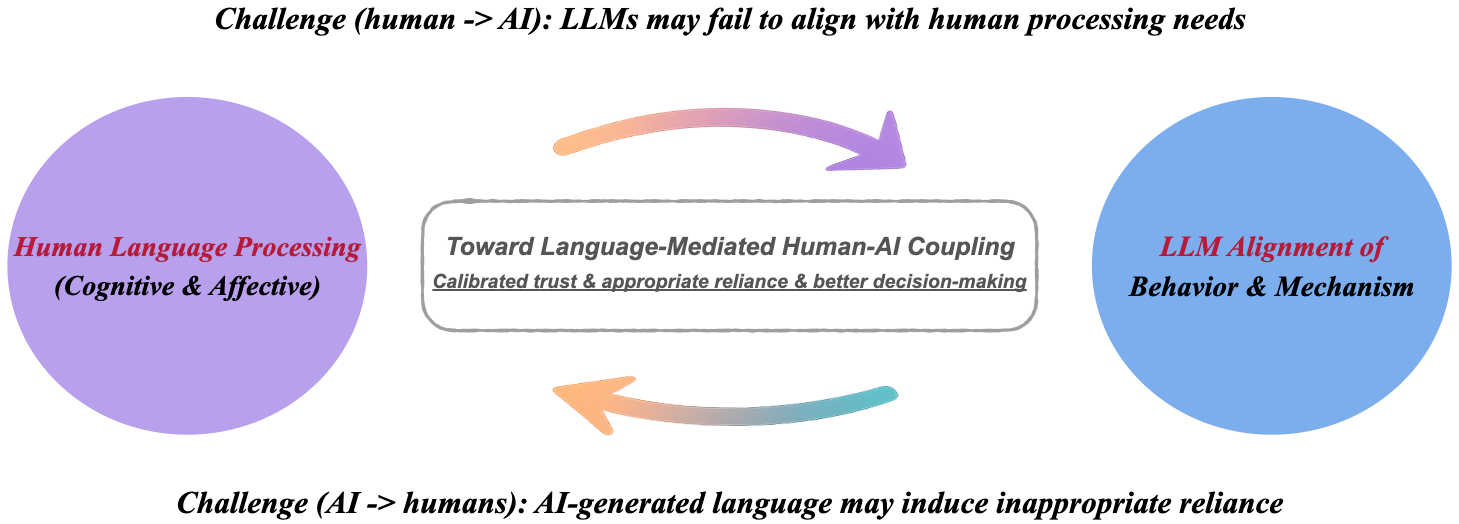

My current direction focuses on reciprocal human-AI alignment and bidirectional coupling, so that 1) LLMs align with human processing needs, and 2) AI-generated language supports appropriate human reliance.

Two full papers were accepted to ACL 2026 (one Main, one Findings) in San Diego, USA.

One full paper was accepted to ACM UMAP 2026 in Gothenburg, Sweden.

A full paper and a poster were accepted to CHI 2026 in Barcelona.

New work was accepted by IJHCS and CSCW 2026.

A VR and AI project was selected for first-round incubation funding from the Wellcome Trust AI Accelerator.

A full paper and an LBW contribution were accepted to CHI 2025 in Yokohama.

Ongoing publications and presentations across COLING, CSCW, ICMI, and leading HCI venues.